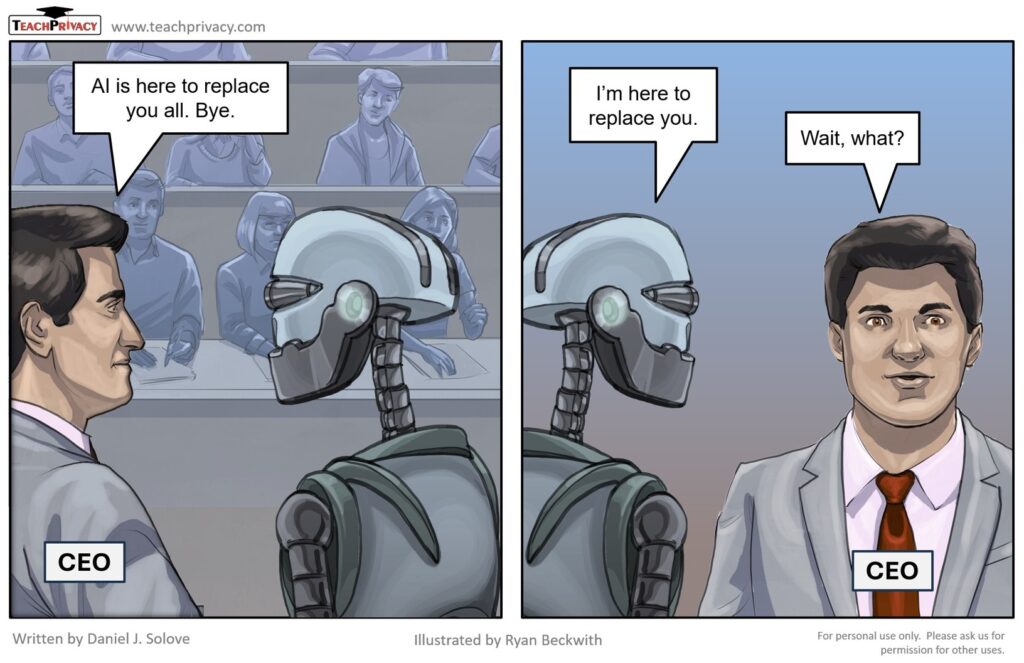

Here’s my latest cartoon – about AI job replacement.

Cartoon: AI Job Replacement

Posts containing Cartoons by Professor Daniel J. Solove for his blog at TeachPrivacy, a privacy awareness and security training company.

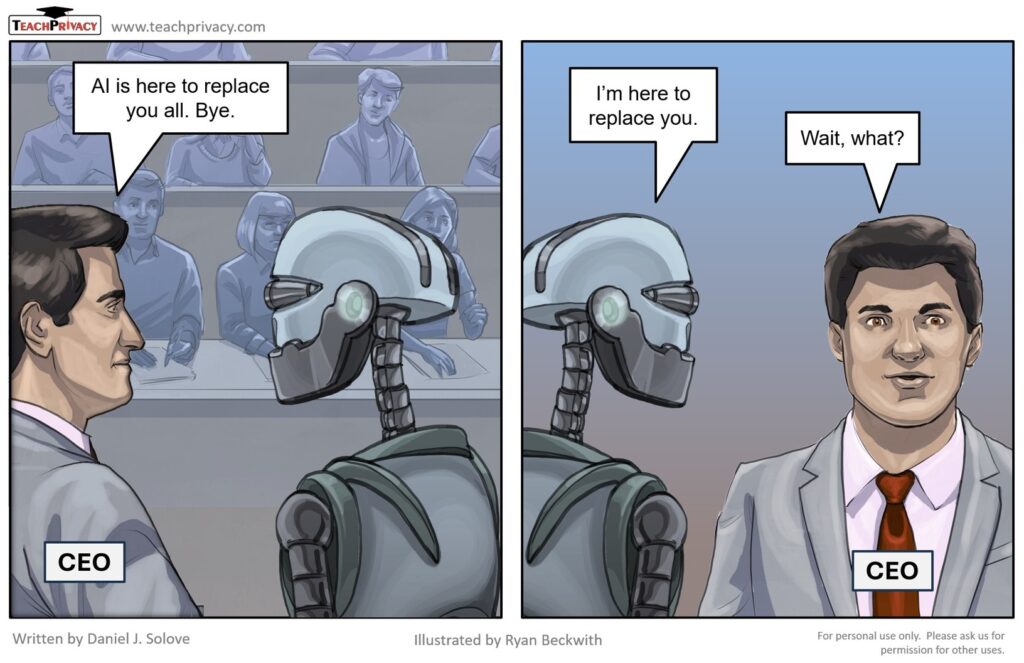

This cartoon illustrates a key point of my new book, ON PRIVACY AND TECHNOLOGY: the law must establish the right incentives for technology to promote privacy, safety, and other important values. Only by establishing the right legal structure and the right incentives can the law succeed in holding creators and users of technology accountable. Instead, the […]

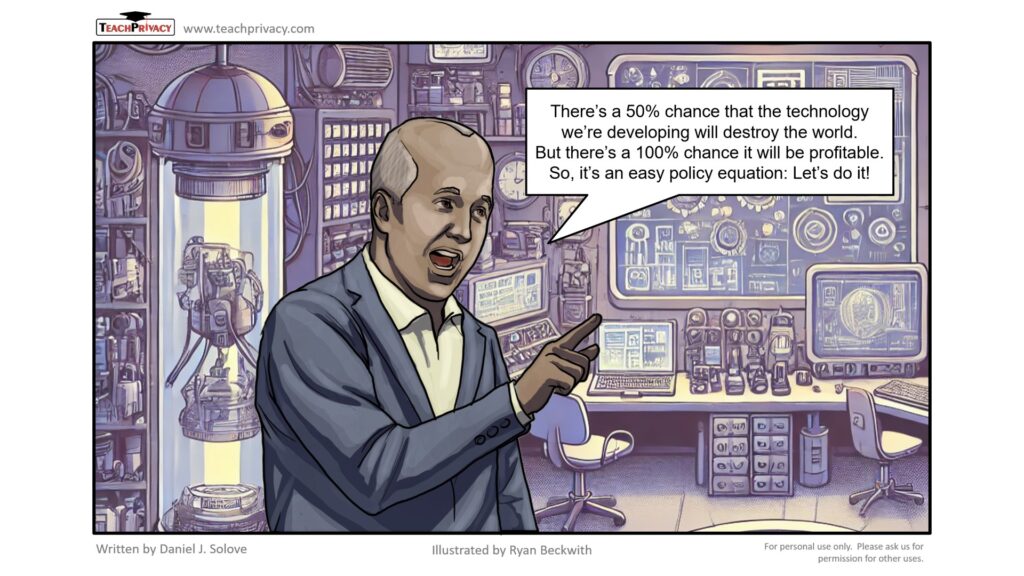

Here’s a cartoon about individual privacy rights. As I argued in my article, The Limitations of Privacy Rights, 98 Notre Dame Law Review 975 (2023), privacy law puts far too much onus on individuals to protect their privacy through exercising privacy rights such as rights to access, correct, object, or delete. These rights are time-consuming to […]

Here’s a new privacy cartoon for you to enjoy, along with a selection of my favorite classics from the archive. Enjoy the laughs! If the Real World Were Like the Internet

A new cartoon to capture the experience of browsing the internet.

A new cartoon for the times we live in . . .

Here’s a cartoon on AI predictions. With Hideyuki Matsumi, I have written quite critically about the use of AI algorithmic predictions for human behavior: The Prediction Society: Algorithms and the Problems of Forecasting the Future, 2025 University of Illinois Law Review (forthcoming 2025).

Remember back in 2023, when AI company CEOs called for AI regulation? This cartoon is based on that call and what happened thereafter. In 2023, OpenAI CEO Sam Altman testified before Congress about the need for AI regulation. A group of AI company leaders encouraged AI regulation in a closed-door Senate meeting. Elon Musk […]

A cartoon for the holidays. Additional holiday goodies: Stocking stuffer for kids (ages 7-12) – my children’s book about privacy, The Eyemonger. Pre-order my new book about privacy, On Privacy and Technology (Feb. 2025) – it’s mercifully concise, about 120 pages! If you want to keep up with my writings, cartoons, events, etc., please subscribe […]

Here’s a roundup of my cartoons and blog posts for 2024. CARTOONS Notice and Choice Personal Data